Medical Device Cybersecurity Testing: Methods, Requirements and Regulatory Compliance

Medical device cybersecurity testing has moved from a recommended best practice to a mandatory regulatory requirement. Both the FDA and EU MDR now explicitly require manufacturers to demonstrate, through documented testing evidence, that their devices are adequately protected against cybersecurity threats throughout the entire product lifecycle — from initial design through post-market surveillance.

This article provides a comprehensive guide to medical device cybersecurity testing: what it is, which testing methods are required, how regulatory frameworks in the US and EU define testing expectations, and how to build a cybersecurity testing program that is both technically rigorous and audit-ready. It is part of our ongoing series on medical device software regulation, alongside our articles on IEC 62304, SOUP management, and IEC 81001-5-1.

Why Cybersecurity Testing Is Now Mandatory for Medical Devices

The regulatory landscape for medical device cybersecurity has changed fundamentally in recent years. Both the FDA and EU MDR now make cybersecurity testing a mandatory element of the regulatory pathway. The FDA’s guidance on Cybersecurity in Medical Devices states that “security testing documentation and any associated reports or assessments should be submitted in the premarket submission.” The EU’s MDCG 2019-16 guidance similarly states that “the primary means of security verification and validation is testing. Methods can include security feature testing, fuzz testing, vulnerability scanning and penetration testing.”

This regulatory convergence reflects a broader recognition that cybersecurity failures in medical devices are not merely an IT inconvenience — they are a direct patient safety risk. Ransomware attacks that disable hospital systems, unauthorized access to implantable device programming interfaces, and data manipulation in diagnostic software have all demonstrated that an inadequately secured medical device can harm or kill patients just as surely as a mechanical failure.

Key regulatory expectations from both the FDA and EU MDR include comprehensive risk management documentation, including an analysis of assets, vulnerabilities, and threats, and “state of the art” security controls to mitigate the identified risks. Cybersecurity testing is the primary mechanism through which manufacturers demonstrate that these controls actually work.

The Regulatory Framework for Medical Device Cybersecurity Testing

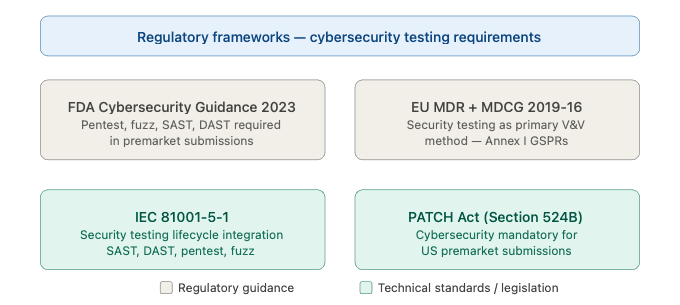

Understanding the regulatory context is essential before designing a cybersecurity testing program. The key frameworks that define testing expectations are:

FDA Cybersecurity Guidance (2026): The FDA guidance requires verification and validation testing including known vulnerability assessments, malware testing, fuzz testing, and structured penetration testing. Static code analysis and binary analysis are also strongly encouraged. Documentation in premarket submissions must detail how cybersecurity risks are identified, managed, and mitigated, with clear lifecycle management plans for post-market updates, security patches, and ongoing threat monitoring.

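EU MDR 2017/745 and MDCG 2019-16: The General Safety and Performance Requirements (GSPR) in Annex I of the EU MDR address cybersecurity in several places. Section 17.2 requires software to be developed and manufactured in accordance with the state of the art, taking into account the principles of development lifecycle, risk management (including information security), verification, and validation. Section 17.4 requires manufacturers to set out minimum requirements for hardware, IT network characteristics, and IT security measures, including protection against unauthorized access. Section 23.4 requires that the instructions for use communicate these requirements to the user.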

IEC 81001-5-1: As discussed in our dedicated article on this standard, IEC 81001-5-1 defines the security testing activities that must be performed as part of the Security Development Lifecycle, including SAST, DAST, penetration testing, and fuzz testing, with full traceability to the threat model and security requirements.

Section 524B of the FD&C Act: This provision, which grew out of the proposed PATCH Act and was enacted in December 2022 as part of the Consolidated Appropriations Act, 2023, makes cybersecurity a mandatory element of premarket submissions for cyber devices, giving the FDA explicit authority to refuse submissions that lack adequate cybersecurity documentation and testing evidence.

Figure 1 — Regulatory frameworks requiring medical device cybersecurity testing

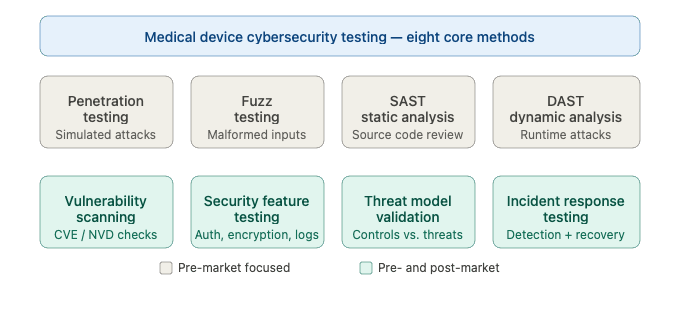

The Eight Core Cybersecurity Testing Methods for Medical Devices

A comprehensive medical device cybersecurity testing program must cover multiple testing modalities, each designed to detect different categories of vulnerabilities. The following are the eight core testing methods required or recommended by FDA, EU MDR, and IEC 81001-5-1.

1. Penetration Testing

Penetration testing, commonly called pen testing, is the most widely recognized and explicitly required form of medical device cybersecurity testing. It is a simulated cyber attack on an application, system, or product, carried out by trained security experts (often called ethical hackers). The primary objective is to identify and exploit vulnerabilities that could compromise the confidentiality, integrity, or availability of the system before a malicious party can do so, so that the manufacturer can develop appropriate security controls to reduce risk.

For medical devices, penetration testing presents unique challenges compared to standard IT penetration testing. The scope must cover not only software interfaces but also hardware attack vectors, firmware, wireless communication protocols, and any cloud-based components. Penetration testing for medical devices must be rigorous, repeatable, and evidence-based. The FDA has raised concerns about penetration testing performed more than a year prior to submission and in some cases has required a new pen test, underlining the importance of timing the test close to the actual submission date.

A well-structured medical device penetration test follows these phases:

Scope definition: Define precisely which components, interfaces, communication channels, and attack vectors are within scope. Document assumptions and exclusions.

Reconnaissance: Gather information about the device’s architecture, network interfaces, operating system, software components, and any publicly available information that an attacker might exploit.

Vulnerability identification: Systematically scan and analyze the target for known vulnerabilities, misconfigurations, and weak points.

Exploitation: Attempt to exploit identified vulnerabilities to demonstrate their real-world impact. This phase provides the most compelling evidence of actual risk.

Post-exploitation: Assess the potential impact of a successful attack — what data could be accessed, what device functions could be manipulated, what downstream harm could result.

Reporting: Document all findings, exploitation evidence, and remediation recommendations in a format suitable for regulatory submission.

2. Fuzz Testing

Fuzz testing, or fuzzing, is a dynamic testing methodology that feeds random, malformed, or unexpected inputs to a software system and observes how it behaves. The key difference from unit testing is that fuzz testing is non-deterministic and exploratory: the expected outcome is not necessarily known in advance, which makes it useful for uncovering bugs and security vulnerabilities that other testing methods miss.

The FDA specifically proposes fuzz testing as a vulnerability testing method to test and analyze cases of misuse or abuse and malformed and unexpected inputs. Fuzz testing is particularly valuable for medical devices because it can uncover memory corruption vulnerabilities, crash conditions, and protocol handling errors that are commonly exploited in real-world attacks but are difficult to identify through manual testing alone.
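As a minimal sketch of the idea, the following mutation-based fuzzer targets a hypothetical length-prefixed frame parser. The parser `parse_frame`, its deliberate missing bounds check, and the seed input are all assumptions made for illustration and do not come from any real device. The random generator is seeded only so that the run is repeatable.

```python
import random

def parse_frame(data: bytes) -> bytes:
    """Hypothetical parser: byte 0 declares the payload length."""
    if len(data) < 2:
        raise ValueError("frame too short")  # documented, expected rejection
    length = data[0]
    payload = data[1:]
    _last = payload[length - 1]  # deliberate bug: declared length is never bounds-checked
    return payload[:length]

def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Apply one to three random bit flips, insertions, or deletions."""
    data = bytearray(seed)
    for _ in range(rng.randint(1, 3)):
        choice = rng.choice(("flip", "insert", "delete"))
        if choice == "flip" and data:
            pos = rng.randrange(len(data))
            data[pos] ^= 1 << rng.randrange(8)
        elif choice == "insert":
            data.insert(rng.randrange(len(data) + 1), rng.randrange(256))
        elif choice == "delete" and data:
            del data[rng.randrange(len(data))]
    return bytes(data)

def fuzz(target, seed: bytes, iterations: int = 2000, rng_seed: int = 0):
    """Return (input, exception name) for every unexpected failure."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        sample = mutate(seed, rng)
        try:
            target(sample)
        except ValueError:
            pass  # the parser rejected the input cleanly
        except Exception as exc:  # anything else is a finding
            crashes.append((sample, type(exc).__name__))
    return crashes

crashes = fuzz(parse_frame, seed=b"\x03abc")
```

Each recorded input can be replayed against the parser to reproduce the failure, which is the kind of evidence a fuzz test report would typically reference. Production fuzzing normally relies on coverage-guided tools rather than a hand-written loop like this one.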

3. Static Application Security Testing (SAST)

SAST involves automated analysis of source code, bytecode, or binary code to identify security vulnerabilities without executing the application. It is typically integrated into the development environment and can be run continuously as part of a CI/CD pipeline.

For medical devices, SAST is used to identify issues such as hardcoded credentials, insecure cryptographic implementations, buffer overflows, injection vulnerabilities, and insecure API calls. The FDA specifically lists static and dynamic code analysis, including testing for credentials that are “hardcoded,” default, easily guessed, and easily compromised, as required elements of cybersecurity testing documentation in premarket submissions.
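To illustrate one of these checks, here is a deliberately simple sketch that flags string literals assigned to credential-like identifiers. The regular expression and the sample source are assumptions chosen for illustration. Real SAST tools analyze syntax trees and data flow and are far more thorough than a line-based pattern match.

```python
import re

# Illustrative pattern only: a credential-like name assigned a string literal
CREDENTIAL_PATTERN = re.compile(
    r"""(?i)\b(password|passwd|secret|api_key|token)\b\s*[:=]\s*["']([^"']+)["']"""
)

def find_hardcoded_credentials(source: str):
    """Return (line number, identifier) for each suspicious assignment."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        match = CREDENTIAL_PATTERN.search(line)
        if match:
            findings.append((line_no, match.group(1)))
    return findings

# Hypothetical source fragment; line 1 of the string is empty
sample = '''
char *user = "service";
char *password = "admin123";
int timeout = 30;
api_key = "ABCD-1234"
'''

findings = find_hardcoded_credentials(sample)
```

A check like this could run on every commit in a CI/CD pipeline, with any non-empty result failing the build until the finding is reviewed.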

4. Dynamic Application Security Testing (DAST)

DAST tests the running application by simulating external attacks against its interfaces — web services, APIs, network ports, and user interfaces — without access to the source code. Unlike SAST, DAST identifies vulnerabilities that only manifest at runtime, such as authentication bypass, session management flaws, and injection attacks that pass static analysis but are exploitable in execution.

For medical devices, DAST is particularly important for any device with a web-based user interface, a REST or SOAP API, or a mobile companion application, as these interfaces represent the most exposed attack surface.
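The sketch below shows the black-box character of DAST on a very small scale: it starts a hypothetical device web interface on the loopback address and probes paths that should require authentication, without looking at the handler's source. The handler, its paths, and the deliberately unprotected `/config` endpoint are assumptions for illustration only.

```python
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

class DeviceHandler(BaseHTTPRequestHandler):
    """Hypothetical device web interface with one unprotected path."""

    def do_GET(self):
        if self.path == "/config":
            self._reply(200)  # deliberate flaw: no Authorization check
        elif self.path == "/admin":
            self._reply(200 if self.headers.get("Authorization") else 401)
        else:
            self._reply(404)

    def _reply(self, code):
        self.send_response(code)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, *args):
        pass  # suppress request logging

def probe_unauthenticated(base_url, protected_paths):
    """Return the protected paths that answer 200 without credentials."""
    # An empty ProxyHandler keeps loopback requests away from any system proxy
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    exposed = []
    for path in protected_paths:
        try:
            with opener.open(base_url + path, timeout=5) as resp:
                if resp.status == 200:
                    exposed.append(path)
        except urllib.error.HTTPError:
            pass  # 401, 403, or 404: the request was rejected
    return exposed

server = HTTPServer(("127.0.0.1", 0), DeviceHandler)  # port 0 picks a free port
threading.Thread(target=server.serve_forever, daemon=True).start()
base_url = f"http://127.0.0.1:{server.server_port}"
exposed = probe_unauthenticated(base_url, ["/config", "/admin"])
server.shutdown()
server.server_close()
```

In this run the probe reports `/config` as exposed and `/admin` as correctly protected. Dedicated DAST tools cover many more attack classes, but the principle of testing the running interface from the outside is the same.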

5. Vulnerability Scanning and Assessment

Vulnerability scanning involves automated tools that compare the device’s software components, operating system, and network services against databases of known vulnerabilities, primarily the CVE (Common Vulnerabilities and Exposures) list and the NVD (National Vulnerability Database). This ties directly into SOUP management obligations under IEC 62304, because the scan results support the evaluation of known anomalies required for each SOUP component.

Vulnerability scanning should be performed both during development and as a continuous post-market activity, with results documented and evaluated against the device’s threat model and risk profile.
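The core matching step can be sketched as follows, assuming a component inventory and an advisory table keyed by exact name and version. All component names and the `CVE-0000-…` identifiers are placeholders, not real advisories. Real scanners query live CVE/NVD data and match version ranges rather than exact versions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Component:
    name: str
    version: str

# Placeholder advisory data standing in for a CVE/NVD feed
KNOWN_VULNERABILITIES = {
    ("examplelib", "1.2.0"): ["CVE-0000-0001"],
    ("exampletls", "3.0.1"): ["CVE-0000-0002", "CVE-0000-0003"],
}

def scan(components):
    """Map each affected component to its known vulnerability identifiers."""
    report = {}
    for component in components:
        ids = KNOWN_VULNERABILITIES.get((component.name, component.version))
        if ids:
            report[component] = ids
    return report

# Hypothetical SOUP inventory for a device
soup_inventory = [
    Component("examplelib", "1.2.0"),
    Component("examplertos", "5.4.2"),
    Component("exampletls", "3.0.2"),  # patched version, not in the table
]

report = scan(soup_inventory)
```

Rerunning the same scan against an updated advisory table is what turns this into a post-market activity: the inventory stays fixed while the set of known vulnerabilities grows.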

6. Security Feature Testing

Security feature testing verifies that the specific security controls implemented in the device, such as access controls, encryption, and authentication, function as designed. This includes testing authentication mechanisms (including multi-factor authentication where implemented), authorization and role-based access control, session management and timeout behavior, data encryption at rest and in transit, audit logging completeness and integrity, and secure update mechanisms.

Unlike penetration testing — which looks for unexpected vulnerabilities — security feature testing verifies that documented security requirements have been correctly implemented.
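As an example of testing one documented requirement, the sketch below checks session timeout behavior. The `Session` class, the 900-second timeout, and the `SEC-REQ-…` identifiers are hypothetical and stand in for a device's real implementation and requirement numbering.

```python
class Session:
    """Hypothetical session with an inactivity timeout."""

    def __init__(self, timeout_s: float, now: float):
        self.timeout_s = timeout_s
        self.last_activity = now

    def is_active(self, now: float) -> bool:
        return (now - self.last_activity) < self.timeout_s

    def touch(self, now: float) -> None:
        # Activity only extends a session that has not already expired
        if self.is_active(now):
            self.last_activity = now

def run_security_feature_tests():
    """Return a pass/fail result per (hypothetical) security requirement."""
    results = {}
    session = Session(timeout_s=900, now=0)
    results["SEC-REQ-01 active before timeout"] = session.is_active(now=899)
    results["SEC-REQ-02 expired at timeout"] = not session.is_active(now=900)
    session.touch(now=1000)  # late activity must not revive the session
    results["SEC-REQ-03 no revival after expiry"] = not session.is_active(now=1001)
    return results

results = run_security_feature_tests()
```

Keying each result by requirement identifier keeps the test output directly traceable to the security requirements it verifies.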

7. Threat Model Validation Testing

The threat model developed during the design phase defines the attack vectors, potential adversaries, and security assumptions of the device. Threat model validation testing verifies that the security controls identified in the threat model actually mitigate the identified threats as intended. The FDA expects evidence of testing that demonstrates the risk control measures are effective against the threats described in the system and use case threat models.

This form of testing creates a direct traceability link between the threat model, the security requirements, and the test results — a chain of evidence that is essential for regulatory submissions.
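The traceability chain can be checked mechanically. The sketch below assumes simple identifier schemes (`T-…` for threats, `SR-…` for security requirements, `TC-…` for test cases) and hypothetical data. It reports every threat that lacks a requirement verified by a passing test.

```python
# Hypothetical traceability data
threat_ids = ["T-01", "T-02", "T-03"]

# Security requirement -> threats it mitigates
requirements = {
    "SR-01": ["T-01"],
    "SR-02": ["T-02"],
}

# Test case -> (requirement verified, outcome)
test_results = {
    "TC-01": ("SR-01", "pass"),
    "TC-02": ("SR-02", "fail"),
}

def unverified_threats(threat_ids, requirements, test_results):
    """Return threats with no requirement backed by a passing test."""
    verified_reqs = {
        req for req, outcome in test_results.values() if outcome == "pass"
    }
    covered = {
        threat
        for req, threats in requirements.items()
        if req in verified_reqs
        for threat in threats
    }
    return [t for t in threat_ids if t not in covered]

gaps = unverified_threats(threat_ids, requirements, test_results)
```

Here `T-02` is flagged because its only test failed, and `T-03` because no requirement addresses it at all. Both are gaps that would break the chain of evidence.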

8. Incident Response and Recovery Testing

Incident response testing evaluates the organization’s ability to detect, contain, and recover from a cybersecurity incident affecting the medical device. While primarily a process-level activity rather than a technical test of the device itself, it is increasingly required by regulators as evidence that the manufacturer has a credible plan for managing post-market cybersecurity events.

Figure 2 — The eight core cybersecurity testing methods for medical devices

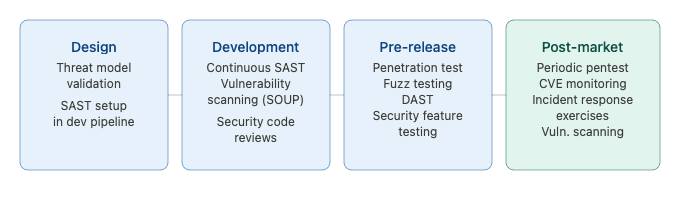

When to Perform Cybersecurity Testing — The Lifecycle Approach

One of the most critical — and most frequently misunderstood — aspects of medical device cybersecurity testing is timing. Many manufacturers treat cybersecurity testing as a pre-submission activity performed once at the end of development. Regulators are explicitly pushing back against this approach.

External penetration testing is recommended to avoid discussions about the independence of the testers. At minimum, the final software configuration should be tested. Smaller pen tests during development are also recommended, as vulnerabilities are almost always found during the process.

The correct approach integrates cybersecurity testing throughout the entire software lifecycle:

During design: Threat model validation testing verifies that the security architecture addresses identified threats before significant development investment has been made. Early-stage SAST can be integrated into the development environment from the first line of code.

During development: Continuous SAST as part of CI/CD pipelines, regular vulnerability scanning of SOUP components as new dependencies are added, and security code reviews integrated into the pull request process.

Before release: A comprehensive pre-release testing cycle including penetration testing, fuzz testing, DAST, and full security feature testing against the final software configuration. The FDA has raised concerns about penetration testing performed more than a year prior to submission, and in some cases has required a new pen test. This means the final penetration test should be performed on the release candidate build, as close to the submission date as practical.

Post-market: Continuous vulnerability scanning, periodic penetration testing at defined intervals, and incident response exercises. Testing at regular intervals identifies potential vulnerabilities before they can be exploited.
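The pre-release timing point above can be expressed as a simple release gate. In this sketch the 365-day limit reflects the "more than a year" concern mentioned earlier and is an assumption, not an official threshold. The function name, build labels, and dates are hypothetical.

```python
from datetime import date

def final_pen_test_is_acceptable(tested_build, release_build, test_date,
                                 submission_date, max_age_days=365):
    """Check that the pen test covers the release build and is recent enough."""
    same_build = tested_build == release_build
    age_days = (submission_date - test_date).days
    return same_build and 0 <= age_days <= max_age_days

submission = date(2025, 3, 1)

ok = final_pen_test_is_acceptable(
    "2.4.0-rc3", "2.4.0-rc3", date(2025, 1, 10), submission)
stale = final_pen_test_is_acceptable(
    "2.4.0-rc3", "2.4.0-rc3", date(2023, 11, 1), submission)
wrong_build = final_pen_test_is_acceptable(
    "2.3.1", "2.4.0-rc3", date(2025, 1, 10), submission)
```

Only the first case passes: the second test is too old, and the third was run against a build that is not the release candidate.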

Building a Cybersecurity Testing Program — Practical Steps

Step 1 — Define the Test Scope Based on the Threat Model

Every cybersecurity testing program must begin with the threat model. The threat model identifies the attack surface — all the interfaces, components, and data flows that could be targeted by an adversary. The test scope must cover the full attack surface defined in the threat model, including hardware interfaces, software APIs, wireless protocols, web and mobile interfaces, cloud components, and update mechanisms.

Gaps between the threat model scope and the test scope are one of the most common findings in regulatory reviews of cybersecurity documentation.
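Such gaps are easy to detect once both scopes are written down as lists. A minimal sketch, assuming hypothetical interface names for the threat model's attack surface and the test plan's scope:

```python
# Hypothetical attack surface taken from the threat model
threat_model_surface = {
    "USB", "BLE", "REST API", "firmware update", "cloud backend", "debug port",
}

# Hypothetical scope taken from the test plan
test_scope = {"REST API", "BLE", "cloud backend", "companion app"}

# In the threat model but never tested
untested = sorted(threat_model_surface - test_scope)

# Tested but missing from the threat model, which suggests the model is incomplete
unmodeled = sorted(test_scope - threat_model_surface)
```

Both differences matter: `untested` lists interfaces that need test coverage, while `unmodeled` points to interfaces that should be added to the threat model.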

Step 2 — Select Testing Methods Based on Risk

Not every testing method needs to be applied at the same depth to every component. Apply a risk-based approach: components that handle sensitive patient data, that control device functionality, or that are internet-facing deserve the most rigorous testing coverage. Components with limited connectivity and no safety-critical functions may require less intensive testing — but all must be covered to some degree.
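One way to make this selection explicit is a small rule that maps component attributes to a set of methods. The two tiers and the methods assigned to each are assumptions for illustration. The actual mapping should come from the manufacturer's own risk assessment.

```python
# Assumed tiers; every component receives at least the baseline
BASELINE = ["vulnerability scanning", "security feature testing"]
RIGOROUS = BASELINE + ["SAST", "DAST", "fuzz testing", "penetration testing"]

def select_methods(handles_patient_data: bool,
                   controls_device_function: bool,
                   internet_facing: bool):
    """Pick the testing tier from the three risk attributes."""
    high_risk = handles_patient_data or controls_device_function or internet_facing
    return RIGOROUS if high_risk else BASELINE

# Hypothetical components
cloud_api = select_methods(True, False, True)
status_led_driver = select_methods(False, False, False)
```

Recording the attributes and the resulting tier for each component also documents the rationale for the chosen test depth.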

Step 3 — Ensure Tester Independence and Competence

Any penetration test report should detail the skills and credentials of the testers and the equipment and methods they used, so that the manufacturer and the regulator can judge whether the testing approach and the testers were appropriate for the device. Inappropriately applied testing can be hard to identify and creates regulatory risk.

For penetration testing in particular, regulatory bodies expect testing to be conducted by qualified security professionals with demonstrated expertise in the specific technology domain of the device. Internal testing is generally acceptable but external independent testing is preferred and increasingly expected for Class II and Class III devices.

Step 4 — Document Everything with Regulatory Submission in Mind

Cybersecurity testing documentation for regulatory submission must include the test plan (scope, methods, tools, acceptance criteria), the test report (detailed findings, exploitation evidence, severity ratings), the remediation record (how each finding was addressed), and the residual risk evaluation (why any unresolved findings do not constitute unacceptable risk).

Findings must be traceable to the threat model and risk assessment — regulators expect to see a closed loop from identified threat to implemented control to verified effectiveness.

Step 5 — Establish a Post-Market Testing Schedule

Define a documented schedule for repeating cybersecurity testing after product release. At minimum, penetration testing should be repeated whenever significant changes are made to the device software, when new significant vulnerabilities are disclosed in SOUP components, and at defined periodic intervals — typically annually for high-risk devices.
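The three retest triggers can be captured in a small check that also records why a retest is due. The function name, the 365-day default interval, and the dates are assumptions for illustration.

```python
from datetime import date

def retest_reasons(last_test: date, today: date,
                   significant_change: bool,
                   new_soup_vulnerability: bool,
                   interval_days: int = 365):
    """Return the reasons a penetration retest is due (empty if none)."""
    reasons = []
    if significant_change:
        reasons.append("significant software change")
    if new_soup_vulnerability:
        reasons.append("new significant SOUP vulnerability")
    if (today - last_test).days >= interval_days:
        reasons.append("periodic interval elapsed")
    return reasons

due = retest_reasons(date(2024, 1, 15), date(2025, 2, 1),
                     significant_change=False, new_soup_vulnerability=True)
not_due = retest_reasons(date(2024, 11, 1), date(2025, 2, 1),
                         significant_change=False, new_soup_vulnerability=False)
```

Returning reasons rather than a bare yes or no gives the post-market file a documented justification for each retest.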

Figure 3 — Cybersecurity testing program — lifecycle integration

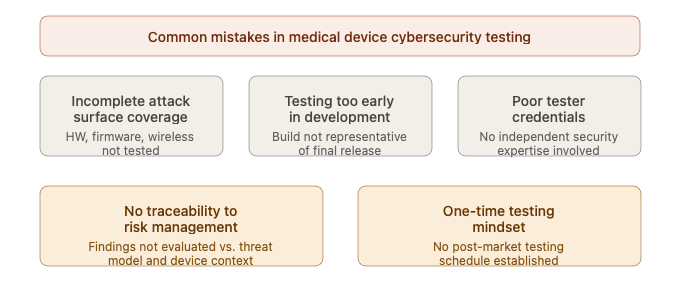

Common Mistakes in Medical Device Cybersecurity Testing

Understanding where manufacturers typically fall short is as valuable as knowing what to do. These are the most common failures identified during FDA reviews and notified body audits of cybersecurity testing documentation.

Testing only the software, not the full attack surface: Medical device cybersecurity encompasses far more than the application software. Hardware debug ports, firmware update mechanisms, Bluetooth and Wi-Fi interfaces, USB connections, and any cloud backend components are all part of the attack surface and must all be included in the test scope.

Performing the final penetration test too early: Testing a pre-release build and then making significant software changes before submission is a common trap. The penetration test must be performed on a build that is representative of the final released product. The FDA has raised concerns about penetration testing performed more than a year prior to submission.

Inadequate tester credentials and independence: Using internal developers to conduct penetration testing without independent security expertise is unlikely to satisfy regulatory expectations for high-risk devices. The test report must document tester qualifications clearly.

No traceability from test results to risk management: Test findings must be evaluated in the context of the device’s threat model and risk assessment. A finding that is dismissed as “low severity” by a generic CVSS score may actually represent a critical risk in the specific clinical context of the device. Every finding must be evaluated against the device’s specific use case, not just its generic severity rating.

Treating testing as a one-time pre-submission activity: Post-market cybersecurity surveillance requires ongoing testing. Manufacturers who have no plan for post-release security testing will struggle to comply with both FDA post-market guidance and EU MDR post-market surveillance obligations.
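The traceability mistake above, relying on a generic severity rating alone, can be guarded against by recording a clinical-context rating next to the generic one and using whichever is higher. This is a hypothetical scheme for illustration, not a method prescribed by the FDA, EU MDR, or CVSS.

```python
SEVERITY_ORDER = ["low", "medium", "high", "critical"]

def contextual_severity(generic: str, clinical_impact: str) -> str:
    """Return the higher of the generic and clinical-context ratings."""
    return max(generic, clinical_impact, key=SEVERITY_ORDER.index)

# Hypothetical finding: generic rating is low, clinical context says otherwise
rating = contextual_severity(generic="low", clinical_impact="critical")
```

With this rule a finding can never be rated lower than its clinical-context rating, so a generically "low" finding with serious patient impact stays visible in the risk evaluation.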

Figure 4 — Common mistakes in medical device cybersecurity testing

Cybersecurity Testing Documentation for Regulatory Submissions

The quality of cybersecurity testing documentation is as important as the quality of the testing itself. Regulators cannot evaluate what they cannot read — a rigorous penetration test with poor documentation is treated the same as inadequate testing.

For FDA premarket submissions, the cybersecurity testing documentation package typically includes a cybersecurity testing plan describing the scope, methodology, tools, and acceptance criteria; individual test reports for each testing method performed; a vulnerability tracking matrix documenting each finding, its severity rating, the remediation applied, and the residual risk evaluation; and a cybersecurity risk management summary demonstrating traceability from identified threats through implemented controls to verified test results.
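A vulnerability tracking matrix of this kind can be kept as structured records and exported for the submission package. The field names, identifiers, and sample entries below are assumptions. The sketch flags findings with missing fields and writes the matrix as CSV.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields

@dataclass
class Finding:
    finding_id: str
    threat_id: str
    severity: str
    remediation: str
    residual_risk: str

def incomplete_findings(findings):
    """Return IDs of findings that have at least one empty field."""
    return [
        f.finding_id for f in findings
        if any(not value.strip() for value in asdict(f).values())
    ]

def to_csv(findings) -> str:
    """Serialize the matrix with one row per finding."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Finding)])
    writer.writeheader()
    for finding in findings:
        writer.writerow(asdict(finding))
    return buffer.getvalue()

# Hypothetical entries
matrix = [
    Finding("F-001", "T-01", "high", "Input length validated", "acceptable"),
    Finding("F-002", "T-02", "low", "", ""),  # remediation not yet recorded
]

open_items = incomplete_findings(matrix)
csv_text = to_csv(matrix)
```

Checking for incomplete rows before export helps ensure every finding in the submission has a documented remediation and residual risk evaluation.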

For EU MDR technical file submissions, the same level of documentation is expected, organized within the overall risk management file and software documentation structure as defined by IEC 62304 and IEC 81001-5-1.

One practical point worth emphasizing: the cybersecurity testing documentation must be written for a regulatory reviewer who may not be a cybersecurity expert. Findings must be explained in terms of patient safety impact, not just technical severity. A finding that sounds benign in cybersecurity terms — such as information disclosure through verbose error messages — must be explained in terms of what an attacker could do with that information in the specific context of the medical device and its clinical environment.

Conclusions

Medical device cybersecurity testing is no longer optional. It is a mandatory, multi-method, lifecycle-spanning activity required by the FDA and under the EU MDR. Manufacturers who approach it as a pre-submission checkbox risk regulatory rejections, delayed market access, and, most importantly, avoidable patient safety risks.

A well-designed cybersecurity testing program covers the full attack surface of the device, applies the right combination of testing methods at the right lifecycle phases, documents findings with regulatory submission quality, and continues after product release through a structured post-market surveillance process. The eight testing methods described in this article — penetration testing, fuzz testing, SAST, DAST, vulnerability scanning, security feature testing, threat model validation, and incident response testing — together provide the comprehensive coverage that regulators expect and patients deserve.

Building this capability takes time and investment, but manufacturers who do it properly will benefit from faster regulatory submissions, more confident audit outcomes, and a stronger cybersecurity posture throughout the commercial life of their devices.

This article is part of the MD Regulatory series on medical device software and cybersecurity regulation. Related articles cover IEC 62304 software lifecycle processes, SOUP management, and IEC 81001-5-1 cybersecurity for health software.
